- #1 Perplexity AI: Best for research (inline citations, Pro Search clarifying questions)

- #2 ChatGPT Search: Best for ecosystem users (search built into ChatGPT conversations)

Look, I'll save you 10 minutes of reading if you're in a hurry: Perplexity is the better search engine. ChatGPT Search is the better chatbot that happens to search. They're solving different problems, and the fact that everyone compares them head-to-head is why most of those comparison articles are useless.

But I'm getting ahead of myself.

I spent two weeks running both tools through real queries, not the softball "what's the capital of France" stuff that every other comparison uses. Research queries, coding questions, current events, product comparisons. I tracked response times, checked every citation, and documented every hallucination. What I found was messier than the clean narratives most reviews push.

Perplexity handles 780 million queries a month now. That's up from 230 million barely a year ago, roughly a 240% jump that makes it the fastest-growing search product since, well, Google. ChatGPT Search, meanwhile, is riding the 300-million-user base OpenAI already had. Different growth stories. Different incentives. And those incentives shape how both tools actually work in ways nobody talks about.
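For anyone checking that figure: the relative increase from 230 million to 780 million monthly queries is (780 - 230) / 230, or about 239%, which is where the "roughly 240%" comes from. Here's a quick sketch of the calculation. The helper name is just for illustration.

```python
def percent_change(old: float, new: float) -> float:
    """Relative change from `old` to `new`, expressed as a percentage."""
    if old == 0:
        raise ValueError("percent change is undefined when the starting value is 0")
    return (new - old) / old * 100


# Perplexity's monthly query volume, in millions (figures from this article)
before, after = 230, 780
print(f"{percent_change(before, after):.0f}% increase")  # prints "239% increase"
```

Put another way, the new volume is about 3.4 times the old one. Same jump, different framing.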

Here's what I mean.

The 2026 AI Search Landscape (No Fluff)

The era of "10 blue links" is dying, and that's not hype. It's math. Zero-click searches jumped from 56% to 69% after AI-powered search went mainstream in 2025. More than two-thirds of searches now end without anyone clicking through to a website. Let that sink in.

But here's the thing. AI search has its own problems. Hallucinations are still a real concern. Citation bias is worse than most people realize. And there's a growing backlash from content creators whose work gets scraped, summarized, and served to users who never visit the original page. Some publishers have seen traffic drops of 20% to 90%.

Both Perplexity and ChatGPT Search sit at the center of this mess. They're not just competing with each other. They're reshaping how information flows on the internet. And whether that's a good thing depends a lot on which side of the equation you're sitting on.

Perplexity AI: The Research-First Search Engine

How Pro Search Actually Works

Perplexity's secret weapon isn't the AI model. It's the workflow. When you use Pro Search, it doesn't just fire off a query and hope for the best. It asks clarifying questions first. "Are you looking for consumer reviews or enterprise solutions?" "Do you need recent data or historical analysis?" That extra step means it's researching with context, not guessing what you meant.

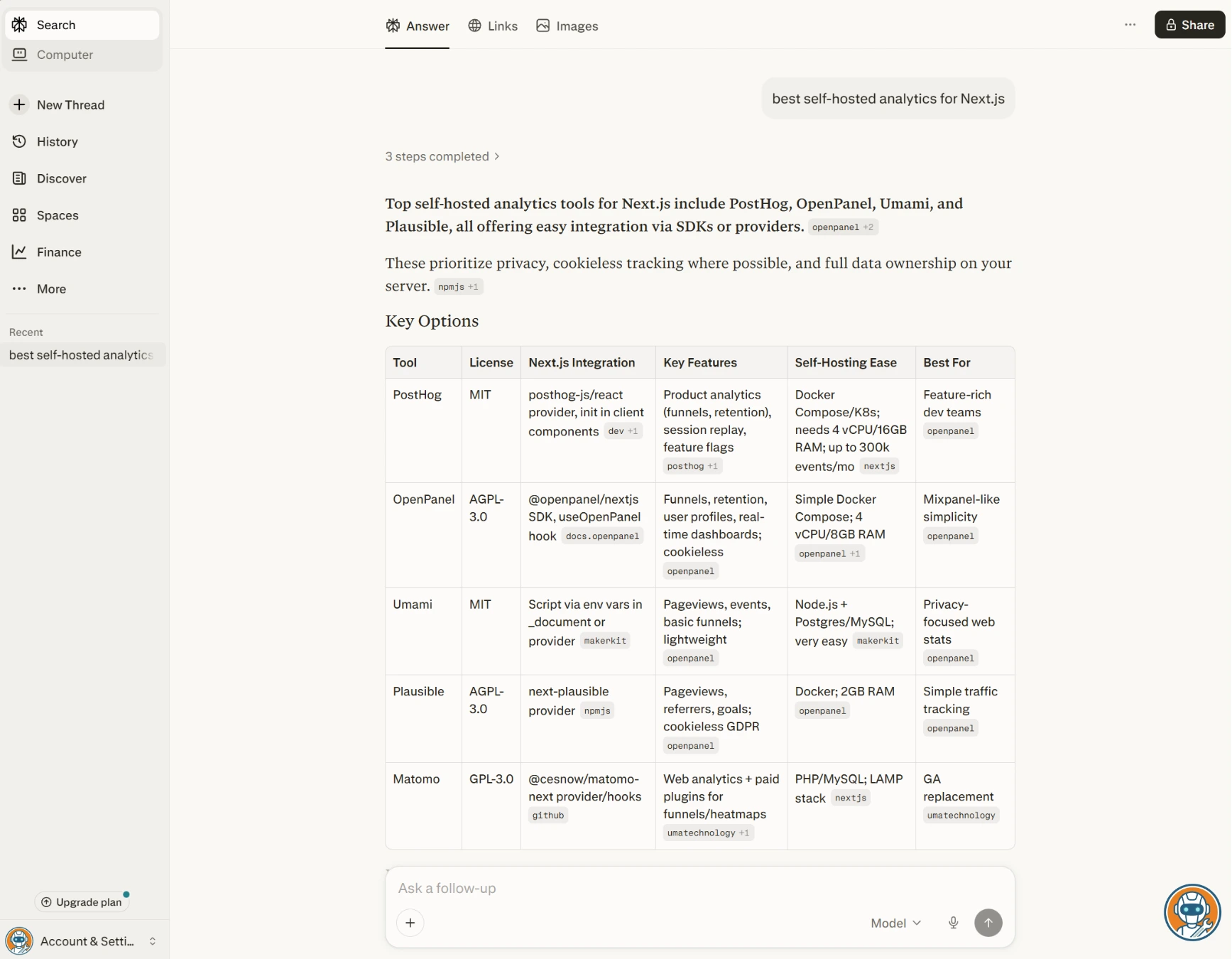

I tried a tricky query: "best self-hosted analytics alternative to Google Analytics that works with Next.js." Perplexity asked whether I cared about privacy compliance, whether I needed real-time dashboards, and what my budget range was. Then it returned five specific tools with inline source links I could verify. Two of them, Umami and Plausible, are lesser-known picks that turned out to be legit recommendations.

ChatGPT Search, given the same query, skipped the clarifying step and gave me a general overview that included Google Analytics itself as a recommendation. Which... is what I was trying to replace.

The Reddit Bias (Why It Loves Forums)

Here's something I didn't expect to find. According to an analysis by Relixir, 46.7% of Perplexity's top cited sources come from Reddit. Almost half. Reddit citations saw a 40x increase between February and April 2025 alone, and in October 2025 Reddit sued Perplexity, accusing it of scraping Reddit's content.

A Semrush study of 248,000 Reddit posts backs this up: Reddit links appear at an average position of 3.4 in Perplexity's answers, meaning they show up early and prominently. Compare that to ChatGPT Search, where Reddit links land at an average position of 6.7, much further down the list of sources.
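"Average position" is easy to misread. One plausible reading, assumed here because the study's exact method isn't described in this article, is the mean 1-based rank of the first Reddit link among an answer's cited sources, counted only over answers that cite Reddit at all. Here's a minimal sketch of that calculation on made-up citation lists. The function name and sample URLs are hypothetical.

```python
from statistics import mean
from typing import Optional
from urllib.parse import urlparse


def average_domain_position(answers: list[list[str]], domain: str) -> Optional[float]:
    """Mean 1-based rank of the first citation from `domain` in each answer.

    Answers that never cite the domain are skipped, so the result says
    where the domain shows up, not how often. Returns None if no answer
    cites it.
    """
    positions = []
    for citations in answers:
        for rank, url in enumerate(citations, start=1):
            host = urlparse(url).hostname or ""
            # Match the bare domain and any subdomain (www., old., etc.)
            if host == domain or host.endswith("." + domain):
                positions.append(rank)
                break  # count only the first hit per answer
    return float(mean(positions)) if positions else None


# Hypothetical citation lists for three answers
answers = [
    ["https://www.reddit.com/r/selfhosted/abc", "https://example.com/review"],
    ["https://example.org/a", "https://example.net/b", "https://old.reddit.com/r/webdev/xyz"],
    ["https://example.com/only-source"],
]
print(average_domain_position(answers, "reddit.com"))  # prints 2.0
```

Skipping answers without Reddit matters: a domain can have an early average position and still be cited rarely, so position and citation share (like the 46.7% figure) measure different things.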

Is this good or bad? Honestly, kind of both. Reddit threads often have the most honest, unfiltered takes on products. But they're also full of anecdotal evidence, outdated advice, and occasional flat-out misinformation. Perplexity treats Reddit like a primary source. And sometimes Reddit is right. Sometimes it really isn't.

Pricing and Hidden Limits

Free tier: unlimited basic searches, limited Pro Search queries per day. Enough for casual use, frustrating for power users.

Pro: $20/month ($200/year). Unlimited Pro Search, access to multiple AI models (GPT-4o, Claude, Mistral), faster responses, unlimited file uploads. This is where it gets genuinely useful. There's also a Max tier at $200/month for unlimited everything, which is overkill for most people but useful if you're running it all day for research work.

If you're a developer: Perplexity's API pricing is separate from the consumer product and runs on a per-request model. The Sonar search tier starts at $5 per 1,000 requests at low context, scaling to $12 at high context. Sonar Pro runs $6-$14 per 1,000 depending on search depth, plus token costs on top. For teams building on Perplexity's API, that adds up fast compared to rolling your own retrieval-augmented generation pipeline. Posts on r/perplexity_ai regularly debate whether the API is worth it versus self-hosting an open-source stack with Mistral or Llama models.
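Because the per-request search fee and the token charges stack, a rough estimate is requests / 1,000 × fee plus tokens / 1,000,000 × token price. Below is a minimal sketch using the fees quoted above. Two assumptions to flag: mapping Sonar Pro's $6-$14 range onto low and high context is my reading, and the token prices are left as inputs because they aren't quoted here. The example values are placeholders, not Perplexity's published rates, and the function name is hypothetical.

```python
# Per-1,000-request search fees in USD, as quoted in this article.
# Only the low/high endpoints are included; treating Sonar Pro's
# $6-$14 range as low/high context is an assumption.
REQUEST_FEE_PER_1K = {
    ("sonar", "low"): 5.00,
    ("sonar", "high"): 12.00,
    ("sonar-pro", "low"): 6.00,
    ("sonar-pro", "high"): 14.00,
}


def estimate_api_cost(
    requests: int,
    model: str,
    context: str,
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Rough total cost: per-request search fees plus token charges.

    Token counts are totals across all requests. Token prices are inputs
    (assumptions); check the current pricing page before relying on this.
    """
    request_cost = requests / 1_000 * REQUEST_FEE_PER_1K[(model, context)]
    token_cost = (
        input_tokens / 1_000_000 * input_price_per_million
        + output_tokens / 1_000_000 * output_price_per_million
    )
    return request_cost + token_cost


# 50,000 low-context Sonar requests, 20M input + 10M output tokens,
# using PLACEHOLDER token prices of $1 per million (not official rates)
total = estimate_api_cost(50_000, "sonar", "low", 20_000_000, 10_000_000, 1.0, 1.0)
print(f"${total:,.2f}")  # prints "$280.00"
```

Swap in current token rates before trusting the output. With real numbers plugged in, the split between request fees and token fees shows where the money actually goes, which is the comparison that matters when weighing the API against a self-hosted stack.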

Standout feature: Pro Search asks clarifying questions before researching, dramatically improving result quality.

Not ideal for: users who want a single integrated tool for chat, images, and search in one place.

Pros:

- Pro Search clarifying questions dramatically improve result quality

- Inline citations on every claim, so they're easy to verify

- 780M monthly queries, the fastest-growing search product around

- Multi-model access on Pro (GPT-4o, Claude, Mistral)

- Surfaces niche forum discussions that Google buries

Cons:

- 46.7% of top citations from Reddit, a heavy forum bias

- 37% failure rate on news source citation accuracy (Tow Center study)

- No meaningful privacy controls for search queries

- Free tier Pro Search limits hit fast during research sessions

- Perplexity referral program ($15 cash) ended November 2025

ChatGPT Search: OpenAI's Walled Garden

Conversational Memory vs. Actual Research

Here's the thing about ChatGPT Search that most reviews get wrong: it's not really a search engine. It's a search feature bolted onto a conversational AI. And that distinction matters more than you'd think.

The strength: context. When you're mid-conversation with ChatGPT about, say, planning a trip to Portugal, and you ask "what's the weather like in Lisbon in March?", it knows you're trip planning. It pulls weather data, links to tourism sites, and suggests packing tips, all within the same conversation thread. Perplexity would treat that as an isolated query. ChatGPT treats it as part of your ongoing thread.

The weakness: it's lazy about citing. ChatGPT Search shows source links in a sidebar panel, not inline with the claims. So when it tells you "Lisbon averages 17°C in March," you have to dig through the sidebar to figure out where that number came from. Perplexity puts the [1][2][3] right next to each claim. For research-heavy work where verification matters, that difference is everything.
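To make the verification difference concrete, here's a toy model of an answer as a list of claims linked to sources. It is not either product's actual code; the class, function names, and URLs are hypothetical. Inline rendering keeps each claim's link to its source visible. Sidebar rendering shows the same sources but drops which claim each one supports.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    text: str
    # 0-based indexes into the answer's shared source list
    source_ids: list[int] = field(default_factory=list)


def render_inline(claims: list[Claim], sources: list[str]) -> str:
    """Inline style: each claim is followed by its own [n] markers."""
    body = " ".join(
        claim.text + "".join(f"[{i + 1}]" for i in claim.source_ids)
        for claim in claims
    )
    refs = "\n".join(f"[{n}] {url}" for n, url in enumerate(sources, start=1))
    return f"{body}\n\n{refs}"


def render_sidebar(claims: list[Claim], sources: list[str]) -> str:
    """Sidebar style: sources are listed separately, so the claim-to-source
    mapping in `source_ids` never reaches the reader."""
    body = " ".join(claim.text for claim in claims)
    return body + "\n\nSources:\n" + "\n".join(sources)


sources = ["https://example.com/climate-data", "https://example.com/travel-guide"]
claims = [
    Claim("Lisbon averages 17°C in March.", [0]),
    Claim("Pack a light rain jacket.", [1]),
]
print(render_inline(claims, sources))
print()
print(render_sidebar(claims, sources))
```

Both functions receive identical data. The only difference is whether the `source_ids` mapping survives rendering, and that mapping is exactly what you need to check a specific number like the 17°C figure.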

Quick note: "SearchGPT" was actually a temporary prototype. OpenAI folded it into ChatGPT as a built-in search feature. As of February 2025, ChatGPT search is available to all users, including the free tier. Plus subscribers ($20/month) get more searches and priority access. If you've already got ChatGPT Plus for writing or coding, search is included at no extra cost.

The Legacy Media Bias (Why It Favors Big Publishers)

This is the part that genuinely bothers me.

OpenAI has signed licensing deals with News Corp, The Atlantic, Vox Media, AP, and Axios, among others. These deals are reportedly worth hundreds of millions. And when you use ChatGPT Search, guess whose content tends to show up most prominently? The publishers OpenAI is paying.

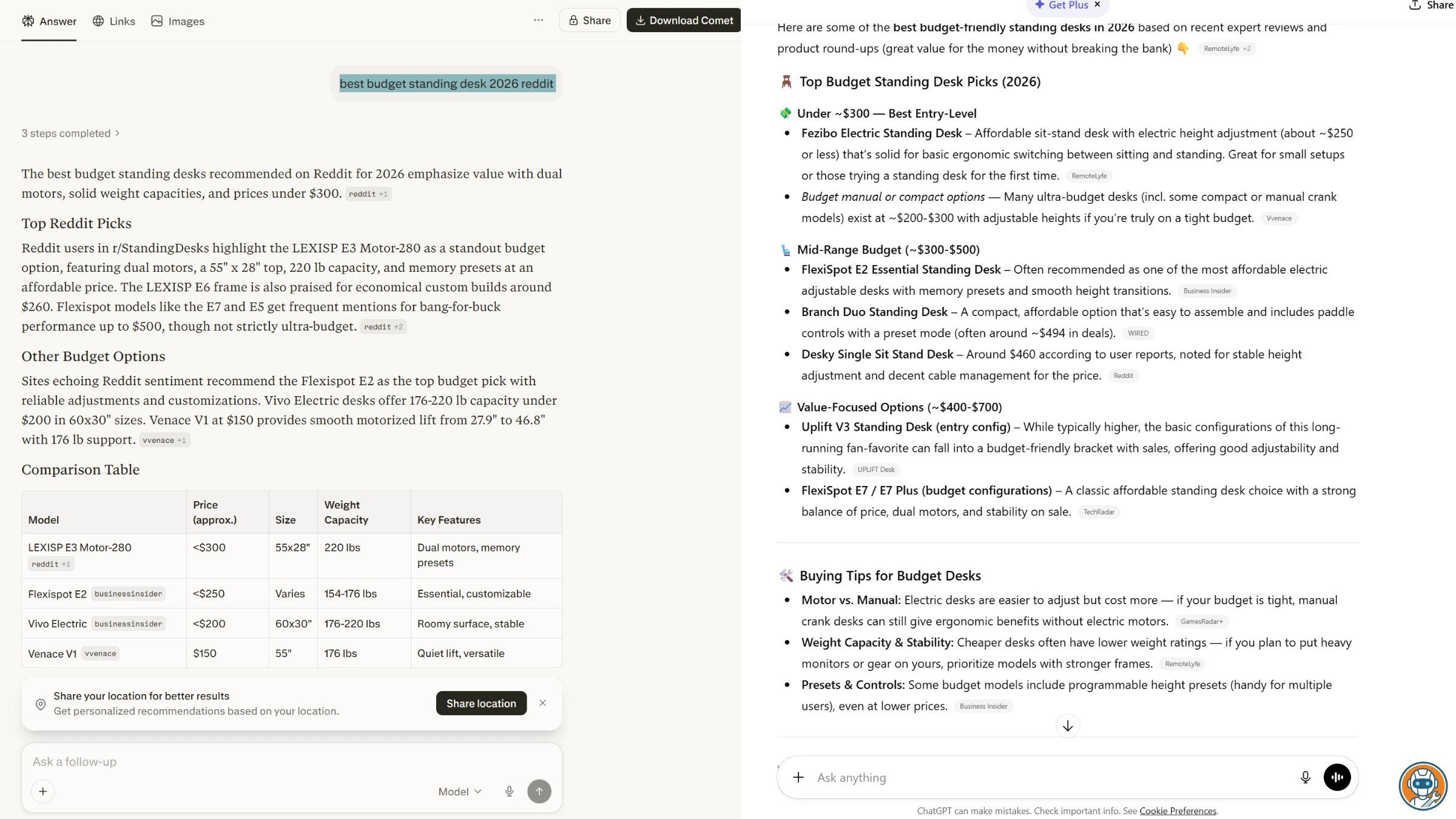

Here's a telling example. Search "best budget standing desk 2026" in both tools. Perplexity cites a Reddit thread, a Wirecutter article, two independent review blogs, and a YouTube video. ChatGPT Search cites The Verge (Vox Media), AP, a New York Times affiliate roundup, and one generic Amazon link. The independent blogs that actually review standing desks? Nowhere to be found.

Is this provably biased? I can't say the licensing deals directly cause citation bias in every query. But the structural incentive is obvious: OpenAI pays these publishers, and their content surfaces prominently. Draw your own conclusions.

Standout feature: conversational context means follow-up queries build on what you've been discussing.

Not ideal for: researchers who need inline citations and verifiable source links next to every claim.

Pros:

- Conversational context: it knows what you've been discussing

- Included with ChatGPT free tier (basic) and Plus ($20/mo)

- Integrated with ChatGPT's full capabilities (code, images, custom GPTs)

- Smooth UX if you're already a ChatGPT user

Cons:

- Citations in a sidebar, not inline, which makes specific claims harder to verify

- Licensing deals with News Corp, Vox, AP raise bias concerns

- Worse citation accuracy than Perplexity (Tow Center study)

- Not designed as a search engine — research is secondary to chat

- No way to opt out of query data being used (beyond toggling training off)

Head-to-Head: Real Performance Data

Both tools, same queries. I focused on the metrics that actually mattered during testing, not vibes, not "it felt faster." Actual data.

The accuracy gap is real but not where most people expect it. The Tow Center for Digital Journalism at Columbia tested 200 citation queries across eight AI search engines in early 2025. Perplexity had the lowest failure rate at 37% incorrect citations. ChatGPT Search answered all 200 queries but was completely correct only 28% of the time and completely wrong 57% of the time. Worse, ChatGPT used hedging language in only 15 of its 134 incorrect responses, meaning it was confidently wrong almost every time. Users on r/artificial have been compiling their own hallucination logs, and the community numbers roughly match what Columbia found.

| Feature | Perplexity Pro | ChatGPT Search |
|---|---|---|
| Monthly Cost | $20/mo | $20/mo (Plus) / Free |
| Citation Style | Inline [1][2][3] next to claims | Sidebar panel links |
| Source Bias | Reddit & forums (46.7% of top sources) | Licensed media (News Corp, Vox, AP) |
| Avg. Reddit Link Position | 3.4 (early, prominent) | 6.7 (later, less prominent) |
| News Citation Accuracy (Tow Center) | 37% incorrect (lowest failure rate tested) | 28% fully correct, 57% fully wrong |
| Speed (Complex Query) | ~3-5s (with Pro Search clarification) | ~4-8s (conversational generation) |
| Best For | Deep research & fact-checking | Ecosystem integration & follow-ups |
| Free Tier | ✓ Limited Pro searches | ✓ Basic web search included |

The "AI Tax": What Creators Hate About Both

I'm calling this the "AI Tax" because that's what it feels like if you're a creator.

Both Perplexity and ChatGPT Search scrape web content, summarize it, and serve the answer directly to users, who then have zero reason to click through to the original site. For bloggers, small businesses, and independent reviewers (like, you know, us), this means your content drives someone else's product while you get nothing.

The numbers are ugly. Zero-click searches jumped from 56% to 69% after AI search went mainstream. Business Insider's organic search traffic dropped 55% between April 2022 and April 2025. DMG Media reported an 89% decline in click-through rates. The IAB proposed the "AI Accountability for Publishers Act" in February 2026 specifically to address this.

And here's the affiliate angle nobody mentions: when Perplexity summarizes a product comparison from an affiliate site, it strips the affiliate links. The creator did the testing, wrote the review, and took the photos. Perplexity gives users the conclusion without the click. The creator gets nothing.

If you're a site owner trying to track how your brand appears in AI search results, and whether these tools are sending any traffic at all, tools like Indexly can monitor your visibility across Perplexity, ChatGPT, and Google's AI Mode. Worth knowing about if this stuff keeps you up at night.

Privacy: Who Owns Your Search Data?

Real-time AI search requires sending your queries, plus conversational context, to external servers. That's fundamentally different from a static Google search. These tools see what you're searching, how you phrase it, and what you do with the results.

ChatGPT: your conversations can be used to train future models unless you toggle off "Improve the model for everyone" in Data Controls. Even then, OpenAI retains conversations for 30 days for "safety monitoring." Their privacy policy is... long. And vague in the ways that matter.

Perplexity: no equivalent opt-out for search queries. Their privacy policy states they collect query data, usage patterns, and device information. For a tool positioning itself as a Google alternative, the privacy story isn't great.

Neither tool is private by default, a point r/ChatGPT users raise frequently. If you're doing sensitive research (competitive analysis, legal questions, medical queries), consider running your searches through a VPN at minimum. A VPN masks your IP address, though it won't hide the queries themselves from Perplexity or OpenAI. We compared the major options in our best VPNs 2026 guide, and NordVPN's clean IP addresses cause the fewest CAPTCHA issues with both Perplexity and ChatGPT based on user reports. And if you want to go a step further and remove your personal data from the brokers feeding these AI tools, check our Incogni vs Privacy Bee comparison.

Verdict: Which AI Search Engine Wins?

Perplexity wins for search. It was built as a search engine from the ground up, and it shows. Pro Search's clarifying questions, inline citations, and multi-model access make it genuinely better at finding and verifying information. The r/perplexity_ai community largely agrees. The Reddit bias is real, but at least you can see exactly where every claim comes from and decide for yourself.

ChatGPT Search wins for ecosystem users. If you're already paying $20/month for ChatGPT Plus and using it for writing, coding, AI video workflows, and image generation, the built-in search is a nice bonus. You won't have to switch tabs, and the conversational context makes follow-up queries smoother. But it's a search feature, not a search engine, and the citation transparency is noticeably worse.

Here's my contrarian take: ChatGPT Search isn't actually a search engine. It's a knowledge launderer. It curates answers that heavily favor OpenAI's licensed media partners while making it feel like you're getting "the web." You're not getting the raw internet. You're getting a filtered version that benefits the companies OpenAI is paying. If you want actual source diversity, Perplexity is the better bet, Reddit bias and all.

For coding-specific searches, honestly, both are fine, but dedicated tools are better. Check out our best vibe coding tools roundup for tools that go way beyond search.

And for the creators reading this whose content is getting scraped by both platforms, I get it. The "AI Tax" is real, it's getting worse, and neither tool has a good answer for it yet. All I can say is that the IAB's proposed legislation is on the table, and the conversation is shifting. It just isn't shifting fast enough.