- #1 Cursor: Best for multi-file editing, agent mode, power users

- #2 GitHub Copilot: Best value, works in your existing IDE for half the price

- #3 Use Both: Copilot for completions + Cursor for heavy agent work

Here's the thing. Every "Copilot vs Cursor" article I've read this year gets the framing wrong. They compare features side by side like a spec sheet and then give you a wishy-washy "it depends." Which is technically correct and practically useless.

So let me skip that and tell you what matters: Copilot costs $10/month. Cursor costs $20/month. That's double. And the question everyone's really asking isn't "which has better features." It's whether that extra $10 buys you anything you'll notice at 2 AM when your refactor is breaking across 12 files. The r/vscode community debates this weekly.

And honestly? After digging into both (the pricing fine print, the agent mode capabilities, the Reddit complaints), the answer is surprising. Not because one is clearly better, but because they're not even competing for the same job anymore.

One's an autocomplete engine on steroids. The other's trying to be your junior dev. That distinction changes everything about how you should think about this decision. If you want the wider landscape of AI coding tools, we covered it in our vibe coding tools roundup, including Lovable, Bolt, and the rest. This article is specifically about the two tools that matter most if you actually write code for a living.

The Architecture Question Nobody Asks

Before we get into features, there's a fundamental difference that shapes everything else: Copilot is an extension. Cursor is an editor.

Copilot plugs into whatever IDE you already use: VS Code, JetBrains, Visual Studio, Xcode, even Neovim. It adds AI on top of your existing workflow. Your keybindings, your extensions, your themes, all untouched. It's the path of least resistance, and for a lot of developers that's exactly what they want.

Cursor is a fork of VS Code that was rebuilt around AI. The AI isn't bolted on; it controls the editing experience. That's why its multi-file editing is better (it can coordinate changes across your entire project in ways an extension simply can't), but it also means you're locked into their editor. No JetBrains. No Visual Studio. No Xcode. If VS Code isn't your thing, Cursor isn't an option.

This matters more than any feature comparison. If you're on IntelliJ or PyCharm, stop reading and go with Copilot. Seriously. Cursor doesn't support JetBrains and has no plans to. And since Copilot added agent mode to JetBrains in February 2026, it's not just autocomplete anymore. You get the full agentic experience.

Still here? Cool. Let's dig into each tool.

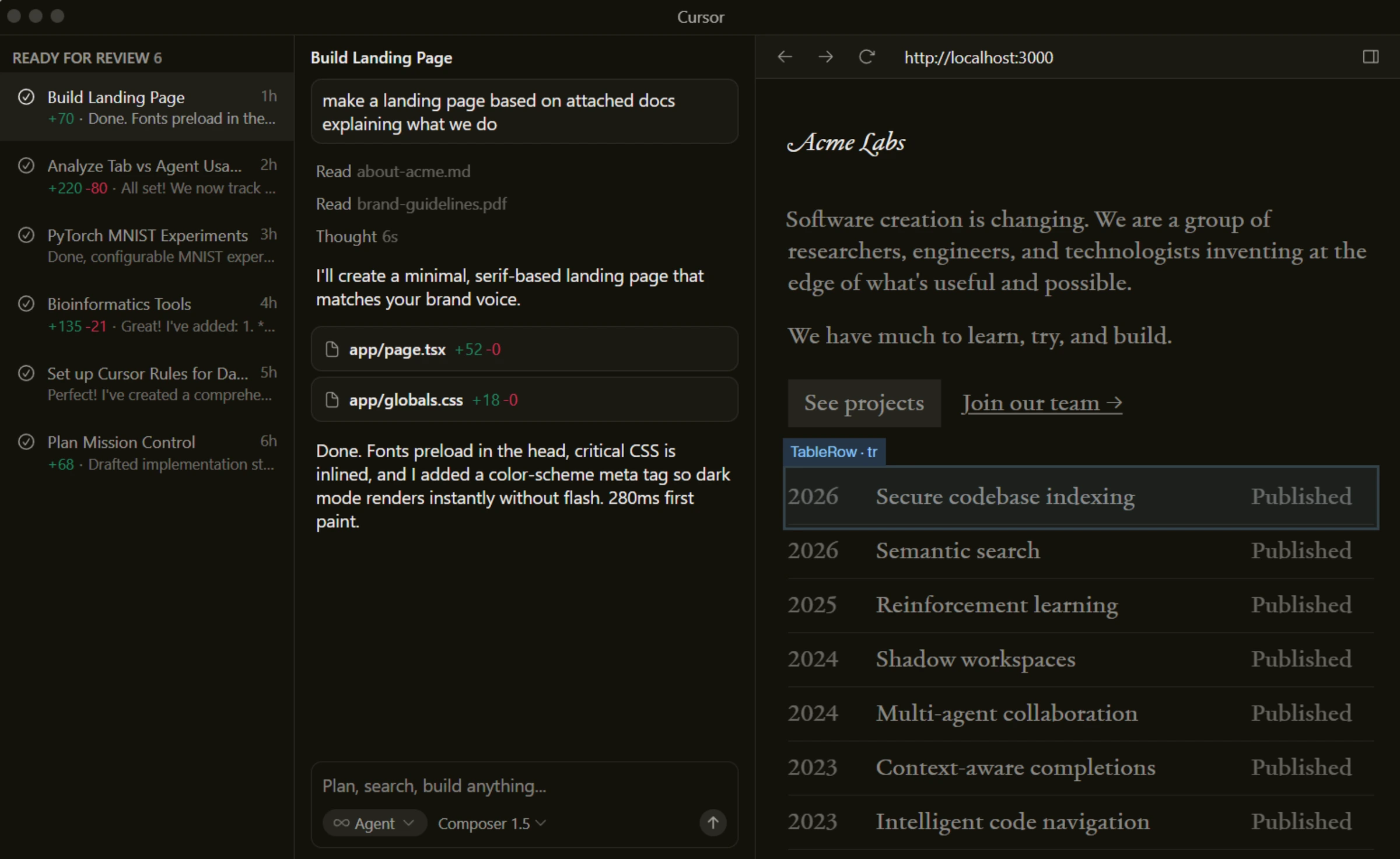

Cursor — The AI-Native IDE

I need to start with the elephant in the room: Cursor had a pricing crisis in June 2025. They switched from a flat 500-request model to usage-based credits without telling anyone properly. Users on Reddit reported their effective request count dropping from ~500 to roughly 225 on the same $20/month plan. CEO Michael Truell apologized publicly. Refunds were issued. But the trust damage was real, and the r/cursor subreddit still brings it up constantly.

That said.

When Cursor works, it works really well. The Tab completion is fast, with sub-200ms predictions that feel native (they use their own fine-tuned model for this, not a third-party API). The codebase indexing is the real differentiator though. Cursor chunks your entire project using tree-sitter, converts it into vector embeddings, and updates incrementally as you code. The result? When you ask it to "add authentication middleware to all API routes," it actually knows what all your routes look like. Copilot guesses. Cursor knows.
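To make the chunk, embed, and update-incrementally idea concrete, here is a minimal sketch. It is not Cursor's implementation. It assumes Python's built-in `ast` module as a stand-in for tree-sitter, a toy hashed bag-of-words vector as a stand-in for a learned embedding, and a hypothetical `Index` class that re-embeds only the chunks whose text changed.

```python
import ast
import hashlib
import math
import re

DIM = 64  # toy embedding size (assumption); real systems use learned embeddings


def chunk_source(source):
    """Split a Python module into one chunk per top-level function or class."""
    chunks = {}
    for node in ast.parse(source).body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            chunks[node.name] = ast.get_source_segment(source, node)
    return chunks


def embed(text):
    """Hash each word-like token into a fixed-size, L2-normalized vector."""
    vec = [0.0] * DIM
    for token in re.findall(r"[A-Za-z_]+", text.lower()):
        bucket = int(hashlib.sha256(token.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class Index:
    """Hypothetical incremental index: chunk name -> content hash and vector."""

    def __init__(self):
        self.hashes = {}
        self.vectors = {}

    def update(self, source):
        """Re-embed only chunks whose text changed; return their names."""
        chunks = chunk_source(source)
        changed = []
        for name, text in chunks.items():
            digest = hashlib.sha256(text.encode()).hexdigest()
            if self.hashes.get(name) != digest:
                self.hashes[name] = digest
                self.vectors[name] = embed(text)
                changed.append(name)
        # Forget chunks that were deleted from the file.
        for name in set(self.hashes) - set(chunks):
            del self.hashes[name]
            del self.vectors[name]
        return changed

    def search(self, query):
        """Return the name of the chunk with the highest cosine similarity."""
        q = embed(query)
        return max(
            self.vectors,
            key=lambda name: sum(a * b for a, b in zip(q, self.vectors[name])),
        )
```

The point of the content hash is that editing one function triggers one re-embedding, not a full re-index, which is what keeps an index like this cheap to maintain while you type.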

The agent capabilities have gotten wild since the v2.5 update. Background agents run in isolated VMs while you keep coding. Think of it like spinning up a junior dev who works on a separate task in the background and comes back with a PR. Sub-agents can spawn their own sub-agents now (yes, a tree of coordinated AI agents working on your codebase). And BugBot, Cursor's automated PR reviewer, catches issues before merge with a 76% resolution rate. Over 35% of BugBot's auto-fix suggestions get merged directly.

The model selection is solid too: Claude Sonnet 4.6 (with a context window of up to 1M tokens in beta), GPT-4.1, Gemini 3 Pro, xAI's Grok models, plus Cursor's own fine-tuned models. And if you bring your own API key, requests go directly to the provider without counting against your Cursor limits. The catch is that Cursor's custom models (the ones powering Agent and Edit) aren't available via BYOK.

Key strength: Background agents run in isolated VMs with sub-agents, and BugBot auto-reviews PRs with a 76% resolution rate.

Not ideal for: Developers on JetBrains, Visual Studio, or Xcode. Cursor only supports its VS Code fork.

Pros:

- Multi-file editing is a genuine step above Copilot: it coordinates changes across 15+ files

- Background agents run in isolated VMs while you keep working on other stuff

- Sub-200ms Tab completions using Cursor's own fine-tuned model

- Full codebase indexing via embeddings, so the AI actually understands your project structure

- BugBot auto-reviews PRs with a 76% resolution rate and catches real bugs

Cons:

- VS Code fork only: no JetBrains, Visual Studio, or Xcode support

- June 2025 credit switch dropped effective requests from ~500 to ~225 without warning

- Heavy Claude model usage burns through the $20 credit pool in 2-3 weeks

- Agent and Edit features don't work with BYOK; they're locked to Cursor's infrastructure

- Sluggish on files over 20,000 lines, and initial indexing spikes CPU on large repos

GitHub Copilot — The $10 Workhorse

Look, the value argument for Copilot is hard to beat. Ten dollars a month. Works inside whatever editor you already use. No migration, no new keybindings to learn, no explaining to your team why you switched to a VS Code fork. For a lot of developers, especially those on JetBrains IDEs, there's nothing to discuss here.

Copilot's inline completions are fast and reliable for the bread-and-butter stuff: boilerplate code, common patterns, function implementations where the intent is obvious. It's not as context-aware as Cursor for complex multi-file work, but for "write this Express route" or "implement this React component," it gets it right most of the time. And the "Next Edit Suggestions" feature, where Copilot predicts where you'll edit next and pre-fills the change, is surprisingly useful once you get used to it.

The model selection got much broader in 2026. On the Pro+ tier ($39/month), you get access to Claude Opus 4.6, GPT-5.2-Codex, Gemini 3 Pro, o3, and more. Even the base Pro tier at $10 gets you 300 premium model requests per month, though that limit is the biggest pain point. Reddit threads describe burning through 20%+ of the monthly quota in a single work day.

But here's what most comparison articles miss: Copilot's coding agent. You can assign a GitHub Issue to Copilot, and it autonomously clones your repo, sets up the environment in a cloud VM, writes the code, runs security scans, and opens a draft PR. All from a GitHub Issue comment, no IDE needed. For straightforward bug fixes and small features, early adopters report it saving 20-30 minutes per task. The catch is that each agent run costs 3 premium requests, and Reddit threads describe the quality as "not as capable as Cursor for complex tasks."
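A quick back-of-the-envelope on those numbers, as a sketch only. It assumes you spend the entire Pro quota of 300 premium requests on agent runs and that every run delivers the reported 20-30 minutes of savings, which is an optimistic upper bound. The function name is made up for illustration.

```python
PRO_QUOTA = 300            # premium requests per month on Copilot Pro
REQUESTS_PER_RUN = 3       # premium requests consumed by one coding-agent run
MINUTES_SAVED = (20, 30)   # reported savings per task, low and high


def agent_budget(quota=PRO_QUOTA, per_run=REQUESTS_PER_RUN):
    """Return (max agent runs, (low, high) hours saved) for a monthly quota."""
    runs = quota // per_run
    low, high = (runs * minutes / 60 for minutes in MINUTES_SAVED)
    return runs, (low, high)


runs, (low, high) = agent_budget()
print(f"{runs} agent runs, roughly {low:.0f}-{high:.0f} hours saved")
```

In practice the quota is shared with chat and premium-model completions, so the real number of agent runs available in a month will be lower.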

One thing I keep coming back to: Copilot's MCP support replaced the old Extensions system (which was deprecated in November 2025). The MCP Registry gives you a curated list of integrations, and since MCP is an open standard, the same protocol works across Copilot, Claude Code, and other tools. That's a bet on interoperability that Cursor's plugin system doesn't quite match yet.

Key strength: Works in every major IDE at half the price, with a coding agent that turns GitHub Issues into draft PRs.

Not ideal for: Power users who need Cursor-level multi-file orchestration and background agents.

Pros:

- Works in VS Code, JetBrains, Visual Studio, Xcode, Neovim, Eclipse, with no editor lock-in

- $10/month for Pro, the best value in AI coding right now

- Coding agent autonomously handles GitHub Issues → draft PRs (no IDE needed)

- Agent mode now works in JetBrains (Feb 2026), making it the only option for IntelliJ/PyCharm users

- MCP support with an open-standard registry for future-proof extensibility

Cons:

- 300 premium requests/month on Pro, which power users burn through in a week

- Multi-file editing (Copilot Edits) isn't as coordinated as Cursor's Composer

- Premium model multipliers are confusing: Claude Opus costs 3x and o3 up to 10x from your quota

- Agent mode quality lags behind Cursor for complex, multi-file refactors

- VS Code gets features first, while JetBrains and Xcode support always lags behind

Agent Mode: The Real Battleground

Forget autocomplete. That's table stakes in 2026. The real question is which tool can independently handle multi-step, multi-file tasks. And this is where the gap is widest.

Cursor's agent ecosystem is more mature. Background agents work in isolated VMs, which means they can run terminal commands, install dependencies, and test code without touching your local environment. The sub-agent architecture (agents spawning agents) is novel. Demos show it decomposing a "refactor the auth system" task into four parallel sub-tasks and coordinating the results. Cloud agents extend this to Slack, mobile, and the web, so you can kick off a task from your phone and review the PR later. And BugBot running automated reviews on every PR is the kind of workflow automation that actually saves time daily.
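The agents-spawning-agents shape is a fan-out/fan-in tree. The sketch below illustrates that pattern only and is not Cursor's API. The `run_agent` function, the task dictionary layout, and the four sub-task names are all hypothetical, and the "work" each leaf does is a placeholder string.

```python
from concurrent.futures import ThreadPoolExecutor


def run_agent(task):
    """Run a task tree: leaves do the work, parents fan out and merge reports."""
    name = task["name"]
    children = task.get("children", [])
    if not children:
        # Placeholder for real work done in an isolated environment.
        return [f"{name}: done"]
    # Each parent gets its own pool, so nested fan-out cannot starve itself.
    with ThreadPoolExecutor(max_workers=len(children)) as pool:
        child_reports = list(pool.map(run_agent, children))  # order preserved
    merged = [line for report in child_reports for line in report]
    return merged + [f"{name}: merged {len(children)} sub-results"]


# Hypothetical decomposition of a "refactor the auth system" request.
auth_refactor = {
    "name": "refactor auth",
    "children": [
        {"name": "session handling"},
        {"name": "token validation"},
        {"name": "route guards"},
        {"name": "tests"},
    ],
}

for line in run_agent(auth_refactor):
    print(line)
```

The coordination step is the hard part in a real system: parallel sub-agents can produce conflicting edits, so the merge has to do more than concatenate reports.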

Copilot's approach is different. The coding agent works through GitHub Issues: assign an issue to Copilot, and it spins up a cloud VM, figures out the codebase, writes the fix, and opens a PR. It's more constrained than Cursor's agents but also more predictable. The agent mode inside VS Code (and now JetBrains) handles multi-file tasks, but going by Reddit feedback, the quality is a step below Cursor's Composer. The benchmark picture is mixed: Copilot scored higher on SWE-bench accuracy (56.5% vs 51.7%), but Cursor was significantly faster (63 seconds per task vs 90 seconds). Speed matters when you're iterating.
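One rough way to put accuracy and speed on the same scale is expected time per resolved task. This is a sketch under a strong assumption: a failed attempt costs as much time as a successful one, and you simply retry until it works, so the expected number of attempts is 1 / accuracy. Real workflows don't behave this neatly.

```python
def seconds_per_resolved_task(accuracy, seconds_per_attempt):
    """Expected seconds spent per successfully resolved task (retry model)."""
    return seconds_per_attempt / accuracy


copilot = seconds_per_resolved_task(0.565, 90)
cursor = seconds_per_resolved_task(0.517, 63)

print(f"Copilot: {copilot:.0f}s per resolved task")  # about 159s
print(f"Cursor:  {cursor:.0f}s per resolved task")   # about 122s
```

Under that simplified model, Cursor's speed advantage more than offsets its lower accuracy, which is one way to read the mixed benchmark result.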

Honestly? Cursor's agent feels like a junior dev who moves fast and knows your codebase. Copilot's agent feels like a capable contractor who needs more specific instructions. Both are useful, just for different kinds of work.

The Pricing Nobody Gets Right

Every article says "Copilot $10, Cursor $20, done." That's the sticker price. Here's the real story.

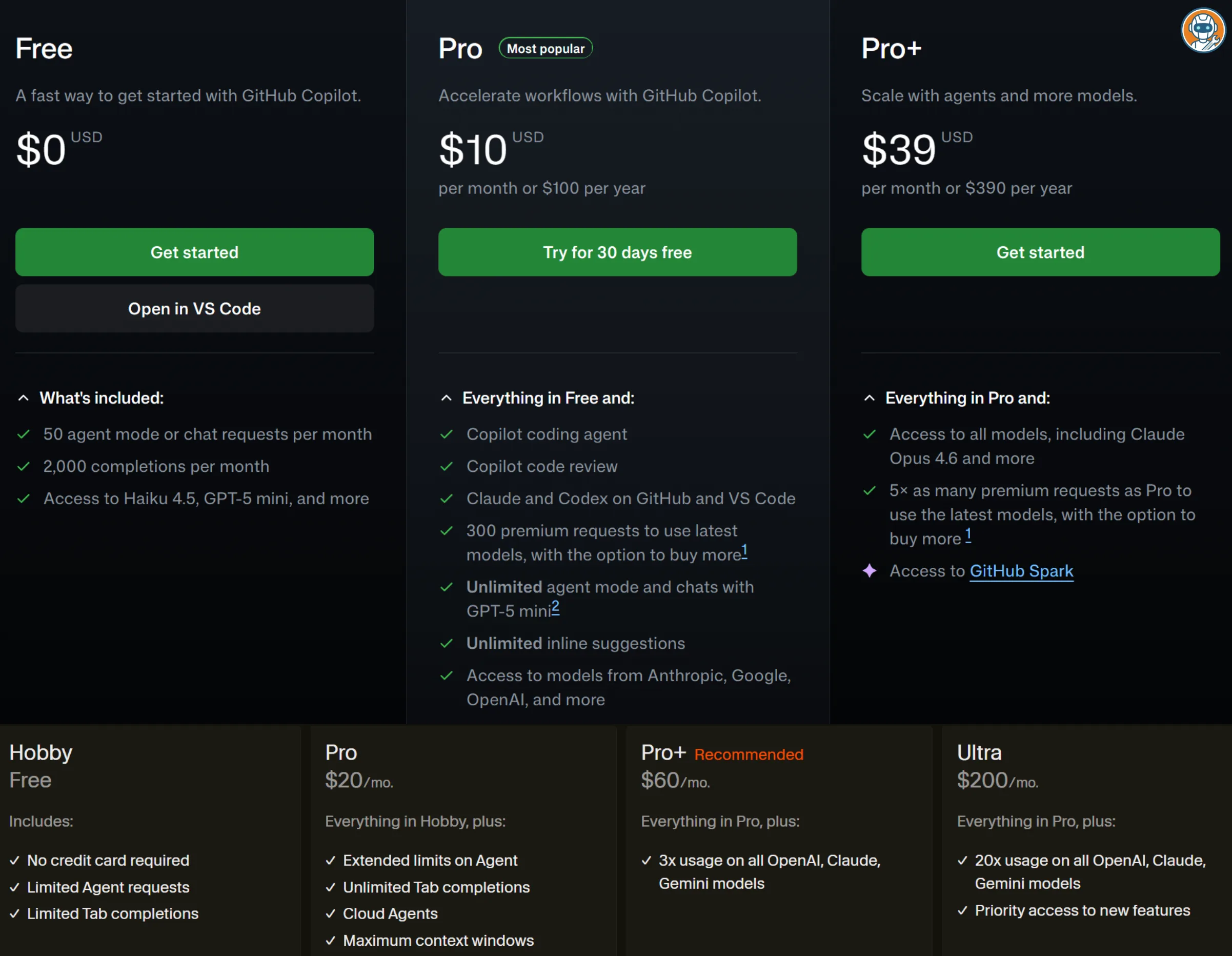

GitHub Copilot — Full Tier Breakdown

- Free: 2,000 completions + 50 premium requests/month. Fine for learning, not for work.

- Pro, $10/month: Unlimited completions, 300 premium requests. The sweet spot for most individual devs.

- Pro+, $39/month: 1,500 premium requests, access to all models (Claude Opus 4.6, o3, GPT-5.2-Codex). The power user tier.

- Business, $19/user/month: 300 premium requests per seat. Org management, policy controls.

- Enterprise, $39/user/month: 1,000 premium requests, knowledge bases, custom models.

The gotcha: model multipliers. A 1x model (Claude Sonnet, GPT-5.2-Codex) costs 1 premium request. But Claude Opus is 3x, and o3 is up to 10x. So your 300 monthly requests could be 100 Opus interactions or 30 o3 calls. Overage is $0.04 per request, which sounds cheap until you realize a single Opus chat costs $0.12 at the 3x multiplier. I've seen r/programming threads where devs blew through their Pro quota in 3 days without realizing their model choice was burning 3x faster.
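The multiplier arithmetic is easy to get wrong in your head, so here it is as a small sketch. The figures come straight from the paragraph above; the function names are made up for illustration, and the o3 multiplier uses the "up to 10x" ceiling.

```python
MONTHLY_QUOTA = 300   # premium requests on Copilot Pro
OVERAGE_USD = 0.04    # price per premium request beyond the quota
MULTIPLIERS = {"1x model": 1, "Claude Opus": 3, "o3": 10}


def interactions_in_quota(multiplier, quota=MONTHLY_QUOTA):
    """How many interactions fit in the quota at a given model multiplier."""
    return quota // multiplier


def overage_cost(interactions, multiplier, quota=MONTHLY_QUOTA):
    """USD owed for premium requests used beyond the monthly quota."""
    premium_used = interactions * multiplier
    return max(0, premium_used - quota) * OVERAGE_USD


for model, mult in MULTIPLIERS.items():
    print(
        f"{model}: {interactions_in_quota(mult)} interactions included, "
        f"${mult * OVERAGE_USD:.2f} each after that"
    )
```

For example, 150 Opus chats in a month would consume 450 premium requests, which is 150 over the quota and adds $6.00 in overage on top of the $10 subscription.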

Cursor — Full Tier Breakdown

- Hobby (Free): 50 premium requests + 500 free-model requests/month. Barely enough to evaluate the tool.

- Pro, $20/month: Unlimited Tab completions, $20 credit pool for premium models. The standard dev plan.

- Pro+, $60/month: 3x the premium model credits.

- Ultra, $200/month: 20x credits, priority access to new features.

- Teams, $40/user/month: Shared chats, SSO, RBAC, centralized billing.

- Enterprise: Custom pricing. SCIM, audit logs, pooled credits.

The gotcha: that $20 credit pool varies wildly depending on which model you use. About 225 Claude Sonnet requests. Around 500 GPT requests. About 550 Gemini requests. If you're a Claude power user (and honestly, for coding, you should be), you're looking at roughly 225 meaningful interactions per month. Heavy users report blowing through that in 2-3 weeks.
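To see how that plays out over a month, here is a small runway sketch. The request counts are the approximate figures above; the daily usage rate of 15 requests is a hypothetical value chosen for illustration, so swap in your own.

```python
# Approximate requests the $20 Pro credit pool covers, per model family.
APPROX_REQUESTS = {"Claude Sonnet": 225, "GPT": 500, "Gemini": 550}


def days_until_empty(model, requests_per_day):
    """Days of use before the credit pool runs out at a steady daily rate."""
    return APPROX_REQUESTS[model] / requests_per_day


DAILY_REQUESTS = 15  # hypothetical usage rate

for model in APPROX_REQUESTS:
    days = days_until_empty(model, DAILY_REQUESTS)
    print(f"{model}: about {days:.0f} days at {DAILY_REQUESTS} requests/day")
```

At 15 requests a day, the Claude Sonnet pool lasts 15 days of use, which lines up with the 2-3 week reports from heavy users.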

Here's the math that nobody does: Copilot Pro at $10/month gives you 300 premium requests with 1x models. Cursor Pro at $20/month gives you ~225 Claude Sonnet requests. So Copilot actually gives you more interactions for less money on comparable models. Cursor's edge is in the quality of those interactions: the multi-file editing and agent features produce better output per request. Whether that tradeoff is worth double the price depends entirely on how complex your daily coding work is.
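Spelled out as a sketch: divide each plan's price by the requests it includes, then ask how much more useful a Cursor request has to be to justify the gap. This ignores overage, multipliers, and unlimited completions, so treat it as a rough framing rather than a full cost model.

```python
def cost_per_request(monthly_price, included_requests):
    """Effective USD per included premium request."""
    return monthly_price / included_requests


copilot = cost_per_request(10, 300)  # Copilot Pro, 1x models
cursor = cost_per_request(20, 225)   # Cursor Pro, Claude Sonnet estimate

break_even = cursor / copilot

print(f"Copilot: ${copilot:.3f} per request")
print(f"Cursor:  ${cursor:.3f} per request")
print(f"Cursor costs {break_even:.2f}x more per request")
```

So on raw request cost, one Cursor interaction needs to replace roughly two to three Copilot interactions to break even. For a 12-file refactor that lands in one pass, that's plausible; for single-function completions, it isn't.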

Full Comparison

| Feature | GitHub Copilot | Cursor |
|---|---|---|
| Price (Individual) | $10/mo (Pro) | $20/mo (Pro) |
| Free Tier | 2,000 completions + 50 premium requests | 50 premium + 500 free-model requests |
| IDE Support | VS Code, JetBrains, Visual Studio, Xcode, Neovim, Eclipse | Cursor only (VS Code fork) |
| Default Model | GPT-4.1 | Claude Sonnet 4.6 / GPT-4.1 |
| Premium Models | 15+ (Claude Opus, GPT-5.2, Gemini 3, o3) | 10+ (Claude, GPT, Gemini, Grok, custom) |
| Multi-File Editing | Copilot Edits (decent) | Composer Agent (excellent) |
| Agent Mode | VS Code, JetBrains, Eclipse, Xcode | Cursor editor only |
| Codebase Indexing | Basic context | Full embeddings (tree-sitter) |
| Background Agents | ✗ | ✓ (isolated VMs, sub-agents) |
| MCP Support | ✓ (open registry) | ✓ (plugins, skills, hooks) |
| BYOK (Own API Key) | ✗ | ✓ (standard models only) |
| JetBrains Support | ✓ (full agent mode) | ✗ |
| Coding Agent (Issues → PRs) | ✓ (GitHub Issues) | ✓ (Cloud Agents, BugBot) |
| SWE-bench Accuracy | 56.5% | 51.7% |
| SWE-bench Speed | 89.91 s/task | 62.95 s/task |

The Verdict

I'll make this simple.

Use Cursor if: you write code across multiple files daily, you want background agents that work while you do other things, and you're comfortable paying $20/month for noticeably better multi-file editing. Cursor's agent mode and codebase indexing are a clear tier above. If you're a full-stack dev building features that touch 5-15 files at once, the output quality difference pays for itself in saved debugging time.

Use Copilot if: you're on JetBrains or any non-VS-Code IDE (no choice here), you want solid AI completions at $10/month, or you're part of a team already on GitHub Enterprise. Copilot's coding agent (Issues to PRs) is also great for small bug fixes and straightforward tasks. And honestly, for inline completions, it's just as good as Cursor. The gap only shows up when you're doing complex multi-file work.

Use both if: you want the best of both worlds. Copilot handles your quick completions and JetBrains work, Cursor handles the heavy agent tasks. $30/month total. A lot of devs on Reddit swear by this combo, and the workflow actually makes sense.

The counter-intuitive take? Copilot's SWE-bench accuracy is actually higher than Cursor's (56.5% vs 51.7%). But Cursor is faster and produces more complete multi-file diffs. Accuracy on isolated tasks doesn't tell you much about real-world refactoring where context and coordination matter more than getting a single function right. As I wrote in our ChatGPT vs Claude comparison, the "best" tool depends on what you're measuring, and most benchmarks measure the wrong thing.

Neither tool is perfect. Cursor's pricing trust issues are real. Copilot's 300 monthly request cap is painfully restrictive. But if I had to pick one and only one, I'd go with Cursor. The multi-file editing quality difference is something you feel every single day. And for developers who already live in VS Code, the switching cost is close to zero. It's the same editor with better AI. If you're moving to AI-assisted development from scratch, check our best free AI writing tools for a broader look at where AI tools are headed.